Linking emotion to decision making through model-based facial expression analysis

Abstract

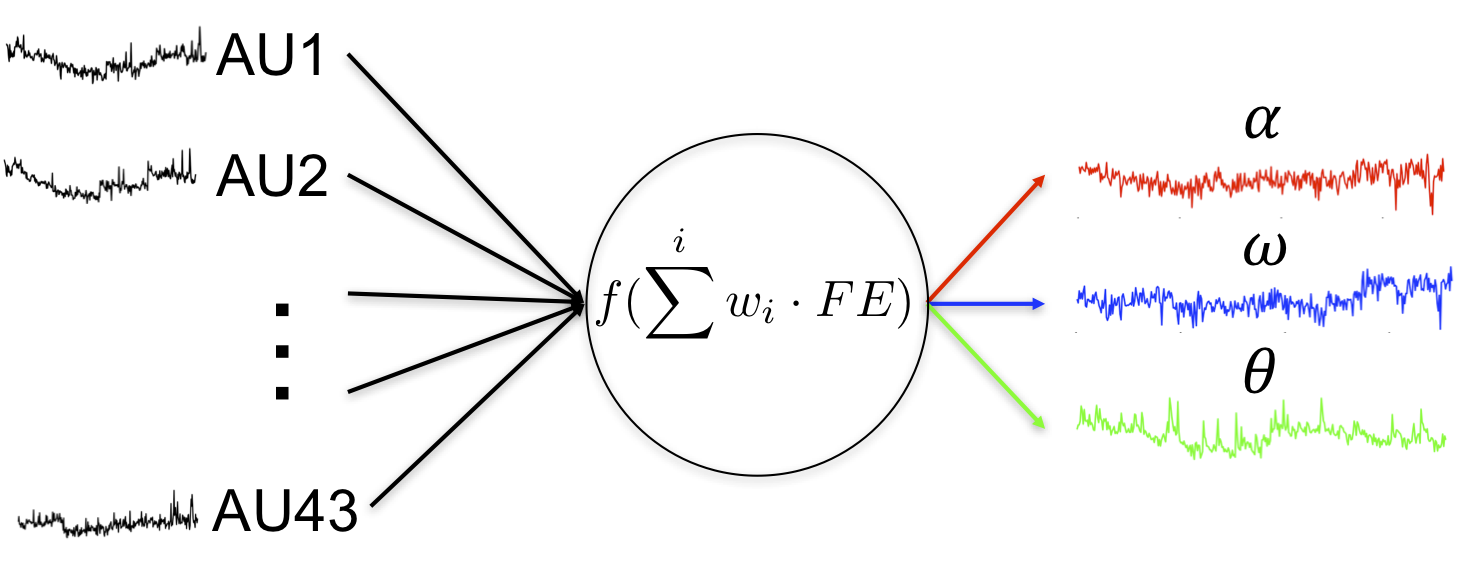

Emotions play a large role in human decision making, yet measuring emotions in real time and incorporating them into cognitive models remain elusive. Growing evidence suggests that facial expressions (FEs) provide an objective measurement of emotions and may carry useful information about people’s underlying states and intentions. While previous studies have investigated general relationships between FEs and decision making, a formal link between the two remains unknown. Thus, we aimed to identify a cognitive mechanism linking value-based decisions and the FEs expressed during a decision-making task. Volunteers (N=30) completed two-armed bandit tasks, where one choice in each task was associated with a certain reward and the other with a probabilistic reward, the probability of which had to be learned from experience. The payoffs of both chosen and unchosen options were revealed on every trial ('full information'). To measure FEs, we used a computer vision tool that detects the temporal dynamics of twenty Action Units as described by the Facial Action Coding System. To determine the cognitive mechanisms linking choice behavior to FEs, we took a joint modeling approach: we developed a novel reinforcement learning model that incorporates FEs in estimating the model's free parameters. Our results suggest that a model assuming that people maximize subjective emotional expectations, which are jointly estimated from FEs and choice outcomes, explains participants’ choice behavior better than competing models. This work represents a novel model-based FE analysis, and our results shed light on the mechanistic links between 'cognition' and 'emotion'.
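To make the task structure concrete, the following is a minimal sketch of a full-information two-armed bandit paired with a generic delta-rule learner and a softmax choice rule. It is not the model developed in this work: the function names, payoff values, reward probability, learning rate `alpha`, and inverse temperature `beta` are all hypothetical, and the facial expression component is omitted entirely.

```python
import math
import random


def softmax_prob_risky(q_safe, q_risky, beta):
    """Probability of choosing the risky option under a two-option softmax rule."""
    return 1.0 / (1.0 + math.exp(-beta * (q_risky - q_safe)))


def simulate(n_trials=100, p_reward=0.7, risky_payoff=1.0, safe_payoff=0.5,
             alpha=0.2, beta=5.0, seed=0):
    """Simulate a learner on a full-information two-armed bandit.

    All parameter values are illustrative assumptions, not values from the study.
    """
    rng = random.Random(seed)
    q = {"safe": 0.0, "risky": 0.0}  # learned value expectations
    choices = []
    for _ in range(n_trials):
        # Choose between the certain and the probabilistic option.
        p_risky = softmax_prob_risky(q["safe"], q["risky"], beta)
        choice = "risky" if rng.random() < p_risky else "safe"
        choices.append(choice)

        # Payoffs of both options are generated and revealed on every trial.
        outcomes = {
            "safe": safe_payoff,
            "risky": risky_payoff if rng.random() < p_reward else 0.0,
        }

        # Full information: update chosen and unchosen options alike
        # with a delta rule (prediction-error update).
        for option, payoff in outcomes.items():
            q[option] += alpha * (payoff - q[option])
    return q, choices


if __name__ == "__main__":
    values, picks = simulate()
    print(values, picks.count("risky"))
```

Because both payoffs are shown on every trial, the learner's expectation for the probabilistic option is updated regardless of which option it chose; this is the feature that distinguishes the full-information design from a standard bandit with partial feedback.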